
2. Democratized Data Access

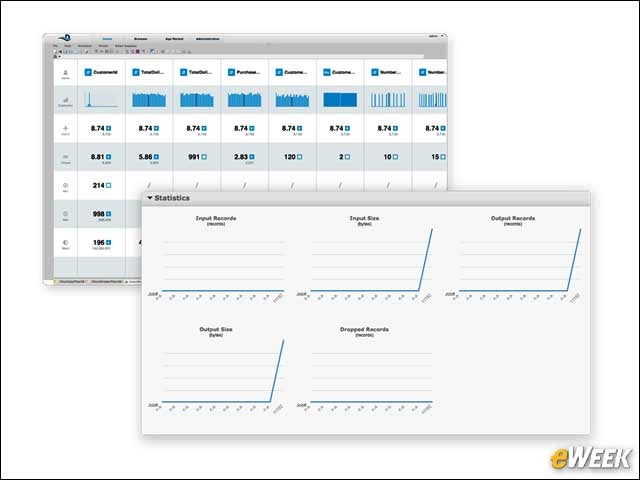

3. Quality and Consistency

Visual data profiling lets users spot outliers early in the cleansing and analysis process, protecting downstream analysis from dirty data. Impact analysis shows who or what will be affected if a change is made in the data pipeline. Detailed data statistics reveal issues with quality, completeness and throughput.
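To make the profiling idea concrete, here is a minimal Python sketch of the kind of column statistics and outlier check a profiling step might compute. It is not Datameer's implementation: the function name `profile_column`, the sample data and the choice of the common 1.5 × IQR rule are all assumptions made for illustration.

```python
from statistics import quantiles


def profile_column(values):
    """Return basic statistics and IQR-based outliers for a numeric column.

    Assumption: None marks a missing value, and the column has at least
    two non-missing values (required by statistics.quantiles).
    """
    present = [v for v in values if v is not None]
    q1, _, q3 = quantiles(present, n=4)  # quartile cut points
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return {
        "count": len(values),
        "missing": len(values) - len(present),
        "completeness": len(present) / len(values),
        "min": min(present),
        "max": max(present),
        "outliers": [v for v in present if v < low or v > high],
    }


# Hypothetical order totals with one missing value and one suspicious entry.
order_totals = [20, 22, 19, 21, None, 23, 20, 950]
report = profile_column(order_totals)
```

Running a check like this before the data reaches downstream analysis is what flags the suspicious value early, and the completeness figure covers the missing-data side of quality.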

4. Data Policies and Standards: First Line of Defense

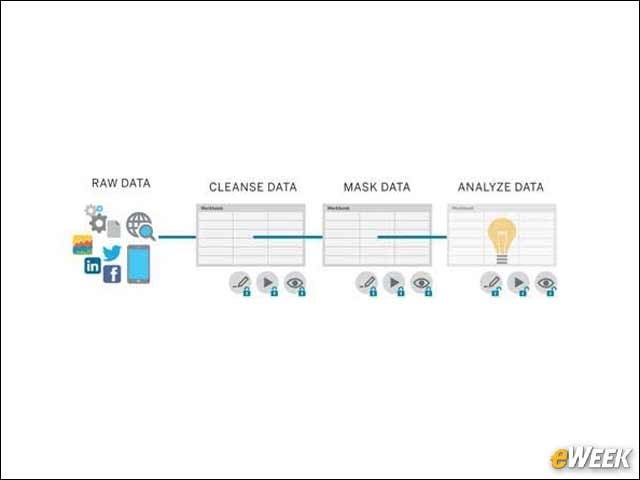

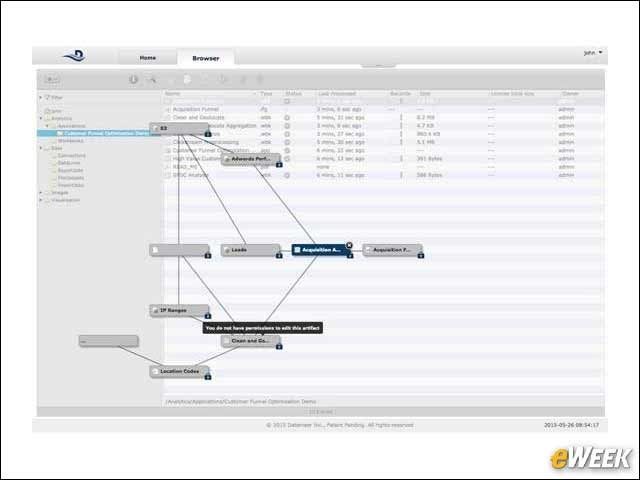

Data access policies are a business's first line of defense against risk. For IT, the goal is to implement policies that manage risk appropriately while still meeting business needs. Secure data views let administrators and privileged users expose a subset of fields to specific groups of users and apply masking and anonymization to sensitive fields. As a result, all users work from a single, standard source of truth.
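A secure data view of this kind can be sketched in a few lines of Python. Everything here is hypothetical and for illustration only: the `VIEWS` table, the group names, the field names and the `mask` and `anonymize` helpers are assumptions, not Datameer's API.

```python
import hashlib

# Hypothetical view definitions: which fields each group may see,
# and which of those fields are masked or anonymized.
VIEWS = {
    "analysts": {
        "fields": ["customer_id", "region", "email"],
        "mask": {"email"},
        "anonymize": {"customer_id"},
    },
    "finance": {
        "fields": ["customer_id", "region", "balance"],
        "mask": set(),
        "anonymize": set(),
    },
}


def mask(value):
    """Hide all but the last four characters of a value."""
    text = str(value)
    if len(text) <= 4:
        return "*" * len(text)
    return "*" * (len(text) - 4) + text[-4:]


def anonymize(value):
    """Replace a value with a short, stable token.

    Note: an unsalted hash is pseudonymization at best; a real system
    would need a keyed or salted scheme.
    """
    return hashlib.sha256(str(value).encode("utf-8")).hexdigest()[:12]


def secure_view(record, group):
    """Project one source record into the view defined for a group."""
    view = VIEWS[group]
    projected = {}
    for name in view["fields"]:
        value = record[name]
        if name in view["mask"]:
            value = mask(value)
        elif name in view["anonymize"]:
            value = anonymize(value)
        projected[name] = value
    return projected


# One underlying record serves both groups.
customer = {
    "customer_id": "C-10042",
    "region": "EMEA",
    "email": "ann@example.com",
    "balance": 1250.0,
}
analyst_row = secure_view(customer, "analysts")
finance_row = secure_view(customer, "finance")
```

Both views are computed from the same `customer` record, which is the sense in which every group still works from one source of truth.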

5. Data Policies and Standards: Multi-stage Analytics

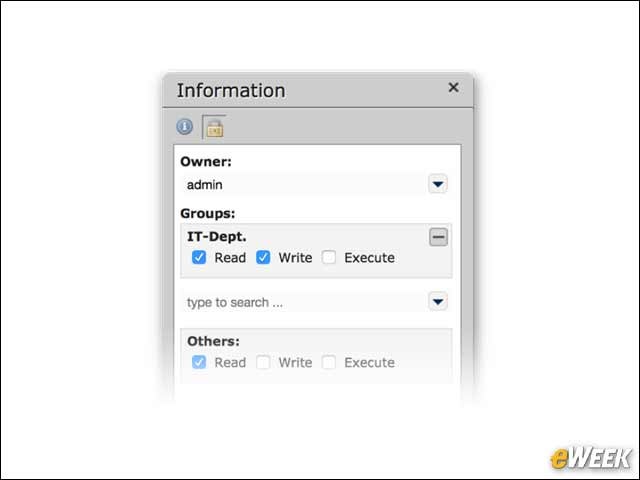

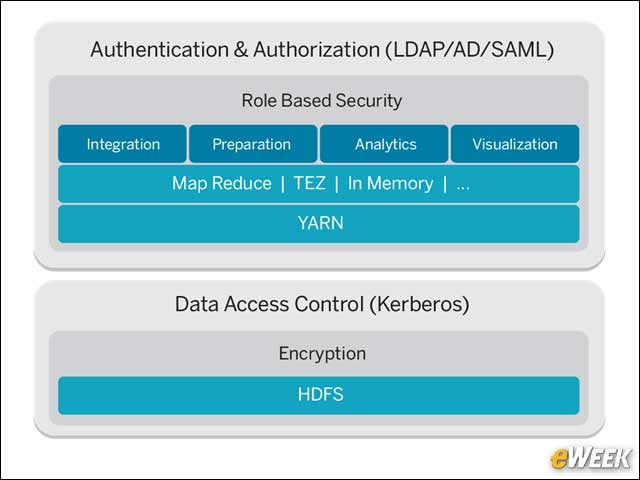

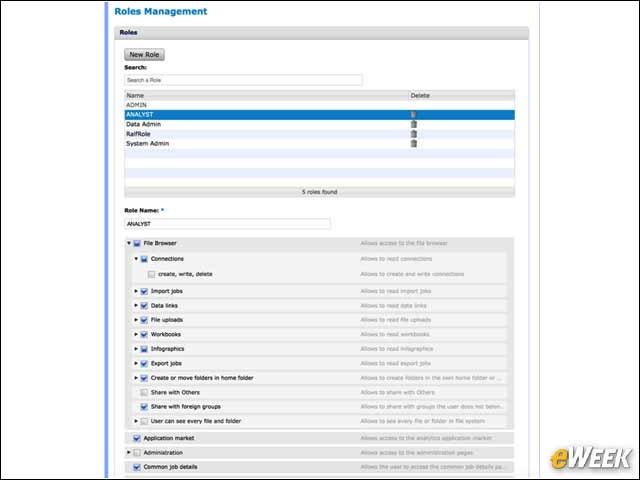

6. Data Security and Privacy: Fine-Grained Access Control

True big data security needs to go beyond the built-in capabilities of the Hadoop Distributed File System (HDFS). Fine-grained access control matters at both the row and column level, and any added metadata must carry the same level of security. Integration with enterprise identity management systems such as Active Directory/LDAP should be a given. Role-based controls on downloading or exporting data and on accessing administrative functions are mission-critical.
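The combination of row-level filtering, column-level filtering and a role-based export control can be sketched as follows. This is an illustrative Python model under stated assumptions: the `Policy` class, the role names and the sample rows are hypothetical, and in practice the role would come from a directory lookup (for example an Active Directory/LDAP group), which is not shown here.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Policy:
    columns: frozenset                  # columns the role may read
    row_filter: Callable[[dict], bool]  # rows the role may read
    can_export: bool = False            # role-based control on exporting


# Hypothetical roles; a real deployment would map directory groups to these.
POLICIES = {
    "emea_analyst": Policy(
        columns=frozenset({"order_id", "region", "amount"}),
        row_filter=lambda row: row["region"] == "EMEA",
    ),
    "admin": Policy(
        columns=frozenset({"order_id", "region", "amount", "card_number"}),
        row_filter=lambda row: True,
        can_export=True,
    ),
}

ROWS = [
    {"order_id": 1, "region": "EMEA", "amount": 120, "card_number": "4111-0000"},
    {"order_id": 2, "region": "APAC", "amount": 75, "card_number": "4222-0000"},
]


def read(rows, role):
    """Apply the row filter first, then drop columns the role may not see."""
    policy = POLICIES[role]
    return [
        {name: value for name, value in row.items() if name in policy.columns}
        for row in rows
        if policy.row_filter(row)
    ]


def export(rows, role):
    """Exporting is a separate privilege from reading."""
    if not POLICIES[role].can_export:
        raise PermissionError(f"role {role!r} may not export data")
    return read(rows, role)


analyst_rows = read(ROWS, "emea_analyst")
admin_export = export(ROWS, "admin")
```

Keeping `export` separate from `read` reflects the point that downloading data is its own privileged action, not a side effect of being able to view it.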

7. Data Security and Privacy: Role-Based Access Control

8. Regulatory Compliance: Data Lineage

9. Regulatory Compliance: Audit Logs

Audit logs capture every login, change of permissions and other privileged action that might involve sensitive data. The audit capabilities include a User Action Log, a log file of relevant user and system events and information; a Security Audit Log, which captures relevant actions for security investigations and audits; a software development kit (SDK), which allows external systems to be apprised of user and system audit events as they happen; and Audit Reports, pre-built reports that aggregate, analyze and visualize log data in a Datameer application called “HUM” (Health, Usage and Monitoring).
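The relationship between these pieces can be illustrated with a small in-memory Python model. It is a sketch only: the `AuditTrail` class, its method names and the set of security-relevant actions are assumptions for illustration and do not reflect Datameer's SDK or log formats.

```python
from collections import Counter
from datetime import datetime, timezone

# Assumption: which actions count as security-relevant.
SECURITY_ACTIONS = {"login", "permission_change", "export"}


class AuditTrail:
    def __init__(self):
        self.user_action_log = []     # every recorded event
        self.security_audit_log = []  # security-relevant subset
        self._listeners = []          # external systems to notify

    def subscribe(self, callback):
        """Register a callback that is told about each event as it happens."""
        self._listeners.append(callback)

    def record(self, user, action, target=None):
        event = {
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "target": target,
        }
        self.user_action_log.append(event)
        if action in SECURITY_ACTIONS:
            self.security_audit_log.append(event)
        for callback in self._listeners:
            callback(event)
        return event

    def report(self):
        """Aggregate the log into per-action counts, as a report might."""
        return Counter(event["action"] for event in self.user_action_log)


trail = AuditTrail()
forwarded = []                    # stands in for an external monitoring system
trail.subscribe(forwarded.append)

trail.record("ann", "login")
trail.record("ann", "view", target="sales_worksheet")
trail.record("root", "permission_change", target="ann")
```

Here the subscriber list plays the role of the SDK hook, and `report()` stands in for the aggregated view a pre-built audit report would provide.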

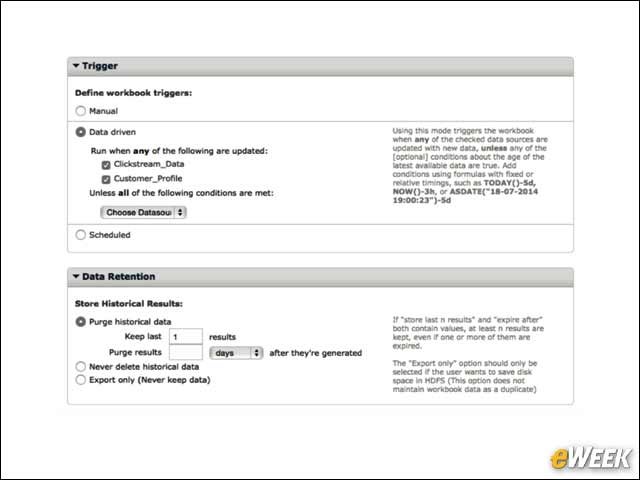

10. Retention and Archiving

Users can manage their data retention policy with Datameer’s flexible data retirement rules. For each imported data set, an individual set of rules can be configured to keep data permanently or purge records that are older than a specific time window. Datameer’s security rules allow retired data to be instantly removed, retained until a specified time or manually removed after system administrator approval.
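The retention rules described above can be modeled roughly as two steps: decide which records are retired, then decide when retired records may actually be removed. The Python sketch below assumes hypothetical function names, mode names (`immediate`, `scheduled`, `approval`) and an `imported_at` field; none of these come from Datameer.

```python
from datetime import datetime, timedelta, timezone


def apply_retention(records, now, max_age=None):
    """Split records into (kept, retired).

    max_age=None keeps data permanently; otherwise records older than
    the time window are retired.
    """
    if max_age is None:
        return list(records), []
    cutoff = now - max_age
    kept = [r for r in records if r["imported_at"] >= cutoff]
    retired = [r for r in records if r["imported_at"] < cutoff]
    return kept, retired


def removable(retired, mode, now, remove_at=None, approved=False):
    """Return the retired records that may be deleted right now."""
    if mode == "immediate":   # removed as soon as they are retired
        return list(retired)
    if mode == "scheduled":   # retained until a specified time
        return list(retired) if now >= remove_at else []
    if mode == "approval":    # removed only after administrator approval
        return list(retired) if approved else []
    raise ValueError(f"unknown retirement mode: {mode!r}")


# Hypothetical data set with one recent and one old record.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    {"id": 1, "imported_at": datetime(2024, 5, 20, tzinfo=timezone.utc)},
    {"id": 2, "imported_at": datetime(2023, 1, 1, tzinfo=timezone.utc)},
]
kept, retired = apply_retention(records, now, max_age=timedelta(days=90))
pending = removable(retired, "approval", now)                # nothing yet
cleared = removable(retired, "approval", now, approved=True)
```

Separating retirement from removal keeps the time-window rule independent of the approval or scheduling step that governs actual deletion.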

11. Future-Proof Open Architecture

As the Hadoop ecosystem evolves and new governance standards and technologies emerge, Datameer offers a pluggable architecture and open APIs intended to keep it compatible with the systems and standards that are introduced.