AI privacy concerns are growing as artificial intelligence becomes more widespread, raising issues about how data is collected, used, and protected. While AI can increase efficiency and improve workflows, it also introduces risks such as data breaches and unauthorized use of data. Many individuals are deeply troubled about having their information harvested to train AI models without their consent.

Understanding how AI uses data is essential for protecting customers and their personal information. This involves learning about common privacy risks, AI data collection methods, and best practices to mitigate these risks.

KEY TAKEAWAYS

- Best practices for managing AI and privacy issues include developing strategic data governance and establishing appropriate use policies to secure a company’s data.

- Given that major companies such as Microsoft, OpenAI, and Google are experiencing privacy issues related to AI, individual users and small businesses are also very likely to be affected.

- The unauthorized use of confidential data and unregulated usage of biometric data are a few of the major issues with AI and privacy.

5 Ways AI Can Collect and Use Your Data

The collection and use of personal data for AI has grown significantly. From social media interactions to smart gadgets, AI systems collect massive amounts of data to improve user experiences, personalize content, and drive targeted advertising. Clearly, this vast data collection poses substantial privacy risks. Understanding how AI collects and processes your data can help you navigate the digital landscape more securely and make better decisions about your online activity.

- Social Media and Internet Interaction: AI systems collect data about your interactions on social media platforms and websites, such as likes, shares, comments, time spent on a page, and link clicks. These interactions help AI understand your preferences and interests, allowing it to personalize information and advertising campaigns.

- Facial and Voice Recognition: AI employs facial and speech recognition algorithms to analyze images, videos, and voice recordings. This data allows AI to identify (to an extent) the apparent emotions of the individuals in those videos and the locations where they were produced.

- Machine Learning Algorithms: Machine learning algorithms use large volumes of data to predict behaviors and personalize experiences. By evaluating historical data, AI can predict your future behaviors or preferences and then personalize online content, advertisements, and other recommendations for your specific needs.

- Surveillance and Smart Devices: Surveillance and smart devices collect sensor data such as location and activity information. GPS and other location-tracking technologies watch user movements, while smart home gadgets that automate chores track daily habits and routines.

- Online Tracking and Cookies: Websites use cookies and other tracking technologies to record user browsing history. Cookies log website visits, allowing AI to better understand online behavior and serve targeted ads based on that history.
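
To make the personalization described above concrete, here is a minimal sketch of how logged interactions could be turned into a ranked interest profile. It is an illustration only, not how any particular platform works: the `INTERACTION_WEIGHTS` values, the event fields, and the `build_interest_profile` function are all hypothetical.

```python
from collections import Counter

# Hypothetical weights: how strongly each interaction type signals interest.
INTERACTION_WEIGHTS = {"click": 1.0, "like": 2.0, "comment": 3.0, "share": 4.0}


def build_interest_profile(events):
    """Sum weighted interactions per topic and return topics ranked by score."""
    scores = Counter()
    for event in events:
        # Unknown interaction types contribute nothing to the score.
        weight = INTERACTION_WEIGHTS.get(event["type"], 0.0)
        scores[event["topic"]] += weight
    return scores.most_common()


# Illustrative interaction log (made-up data).
events = [
    {"type": "like", "topic": "running"},
    {"type": "click", "topic": "cooking"},
    {"type": "share", "topic": "running"},
    {"type": "comment", "topic": "cooking"},
    {"type": "click", "topic": "travel"},
]

profile = build_interest_profile(events)
print(profile)
```

Even this toy version shows why a handful of likes and shares is enough to infer what a person cares about, which is what makes the underlying data sensitive.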

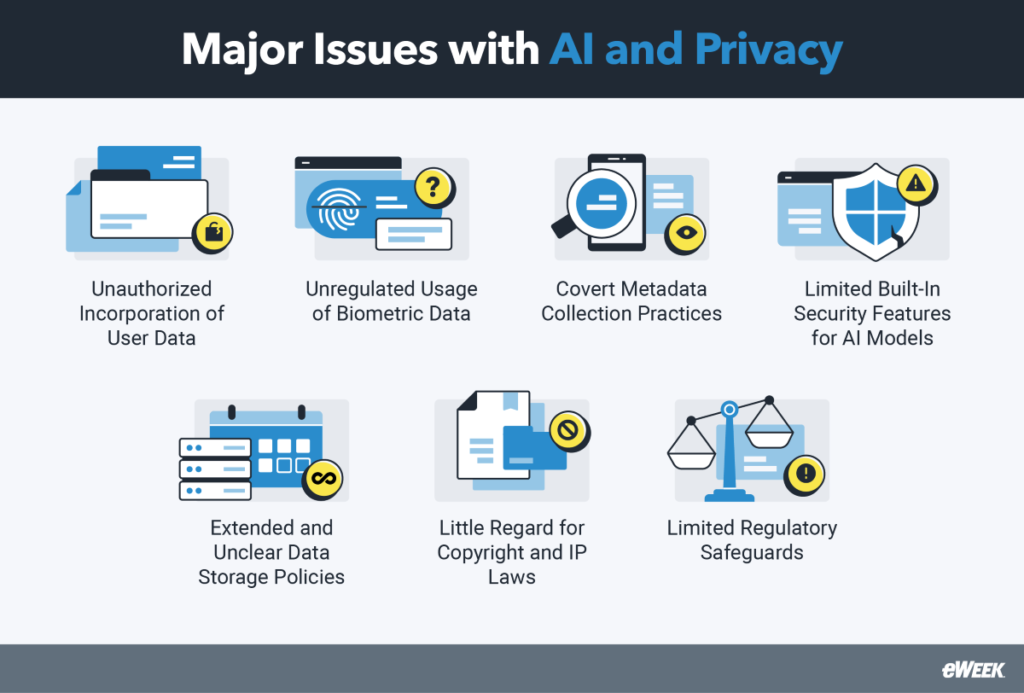

Major Issues with AI and Privacy

Given that the role of artificial intelligence has grown so rapidly, it’s not surprising that issues like unauthorized incorporation of user data, unclear data policies, and limited regulatory safeguards have created significant issues with AI and privacy.

Unauthorized Incorporation of User Data

When users of AI models input their own data in the form of queries, it’s possible that this data will become part of the model’s future training dataset. When this happens, this data can show up as outputs for other users’ queries, which is a particularly concerning issue if users have input sensitive data into the system.

Unregulated Usage of Biometric Data

Many personal devices use facial recognition, fingerprints, voice recognition, and other biometric security methods instead of more traditional forms of identity verification. Public surveillance devices are also beginning to use AI to scan for biometric data to identify individuals quickly. Many individuals are unaware that their biometric data is actively collected, much less that it is stored and used for other purposes.

Covert Metadata Collection Practices

When a user interacts with an ad, a TikTok or other social media video, or almost any major web property, metadata from that interaction, along with the person’s search history and interests, can be stored for more precise content targeting in the future. While most sites have policies that mention these data collection practices and/or require users to opt in, the practices are mentioned only briefly and amid other policy text, so most users don’t realize what they’ve agreed to.

Limited Built-In Security Features for AI Models

While some AI vendors may choose to build baseline cybersecurity features and protections into their models, many AI models do not have native cybersecurity safeguards in place. Even AI technologies with basic safeguards rarely come with comprehensive cybersecurity protections. This is because creating a safer and more secure model can cost AI developers significantly in terms of time to market and overall development budget.

Extended and Unclear Data Storage Policies

Many AI vendors lack clarity about how long, where, and why they preserve user data, whether they’re keeping it for an extended period or using it in ways that do not prioritize privacy. OpenAI’s privacy policy, for example, permits various vendors, such as cloud and online analytics providers, to access and handle user data as requested. It also includes unidentified parties under a broad “among others” category, raising data treatment concerns. While OpenAI allows users to access, remove, or restrict data processing, privacy restrictions are limited, particularly for Free and Plus plan users, leaving certain data usage practices unclear.

Lack of Regard for Copyright and IP Laws

AI models frequently employ training data gathered from across the internet, which may contain copyrighted content, and some AI companies either ignore or neglect copyright and intellectual property rules in the process. AI image generation platforms such as Stability AI, Midjourney, DeviantArt, and Runway AI have faced legal issues for harvesting copyrighted images from the web without the authorization of the original creators. Some providers claim that existing regulations do not expressly prohibit this method for AI training. As AI models improve, prohibiting the use of copyrighted content may become more common, making it increasingly difficult for developers to trace the origins and licenses of the data their models use.

Limited Regulatory Safeguards

Some countries and regulatory bodies are working on AI regulations and safe use policies, but there are no overarching official standards to hold AI vendors accountable for how they build and use artificial intelligence tools. The most privacy-centric regulation is the EU AI Act, which entered into force on August 1, 2024. Some aspects of the law will take as long as three years to become enforceable. With such limited regulation, some AI vendors have come under fire for IP violations and opaque training and data collection processes, but little has come from these allegations. In most cases, AI vendors decide their own data storage, cybersecurity, and user rules without interference.

How Data Collection Creates AI Privacy Issues

Unfortunately, the sheer number and variety of ways that data is collected all but ensures that some of it will be used irresponsibly. From web scraping to biometric technology to IoT sensors, modern life is essentially lived in the service of data collection efforts.

- Web Scraping Casts a Wide Net: Content is scraped from publicly available sources on the internet, including third-party websites, wikis, digital libraries, and more. User metadata has also increasingly been pulled from marketing and advertising datasets and websites, including data about targeted audiences and what they engage with most.

- User Queries in AI Models Retain Data: When a user inputs a question or other data into an AI model, most AI models store that data for at least a few days. While that data may never be used for anything else, many artificial intelligence tools collect and store it for future training purposes.

- Biometric Technology Can Be Intrusive: Surveillance equipment—including security cameras, facial and fingerprint scanners, and microphones—can all be used to collect biometric data and identify individuals without their knowledge or consent. Companies can collect, store, and use this data without asking customers for permission.

- IoT Sensors and Devices Are Always On: Internet of Things (IoT) sensors and edge computing systems collect massive amounts of moment-by-moment data and process that data nearby to complete larger and quicker computational tasks. AI software often takes advantage of an IoT system’s detailed database and collects its relevant data through methods like data learning, data ingestion, secure IoT protocols and gateways, and APIs.

- APIs Interface With Many Applications: APIs provide users with an interface for business software so they can easily collect and integrate different types of data for AI analysis and training. With the correct API and setup, users can collect data from CRMs, databases, data warehouses, and both cloud-based and on-premises systems.

- Public Records Are Easily Accessed: Whether records are digitized or not, public records are often collected and incorporated into AI training sets. Information about public companies, current and historical events, criminal records, immigration documents, and other public information can be collected without prior authorization.

- User Surveys Drive Personalization: Though this data collection method is old-fashioned, surveys and questionnaires remain a tried-and-true method used by AI vendors to collect data from users. Users may answer questions about what content they’re most interested in, what they need help with, or any other question that gives the AI a more accurate method of personalizing interactions.

Emerging Trends in AI and Privacy

Because the AI landscape is evolving so rapidly, the emerging trends shaping AI and privacy issues are also changing at a remarkable pace. Among the leading trends are major advances in AI technology itself, the rise of regulations, and the role of public opinion in AI’s growth.

Advancements in AI Technologies

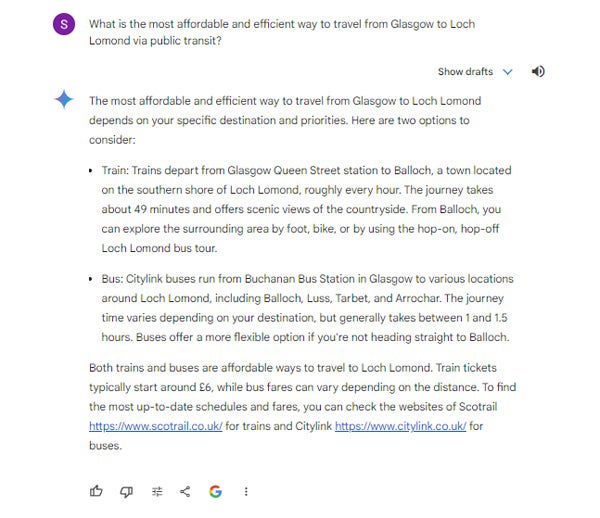

AI software has exploded in terms of technological sophistication, use cases, and public interest and knowledge. This growth has happened with more traditional AI, machine learning technologies, and generative AI. Generative AI’s large language models (LLMs) and other massive-scale AI technologies are trained on incredibly large datasets, including internet data and also more private or proprietary datasets. While the data collection and training methodologies have improved, AI vendors and their models are often not transparent in their training or the algorithmic processes they use to generate answers.

Many generative AI companies have updated their privacy policies and data collection and storage standards to address this issue. Others, such as Anthropic and Google, have worked to develop and release detailed research that illustrates how they are working to incorporate more explainable AI practices into their AI models, which improves transparency and ethical data usage.

Impact of AI on Privacy Laws and Regulations

Most privacy laws and regulations do not yet directly address AI and how it can be used or how data can be used in AI models. As a result, AI companies have had enormous freedom to do what they want. This lack of regulation has led to ethical dilemmas like stolen IP, deepfakes, sensitive data exposed in breaches or training datasets, and AI models that seem to act on hidden biases or malicious intent.

More regulatory bodies—both governmental and industry-specific—are recognizing the threat AI poses and developing privacy laws and regulations that directly address AI issues. Expect more regional, industry-specific, and company-specific regulations to come into play in the coming months and years, with many of them following the EU AI Act as a blueprint for protecting consumer privacy.

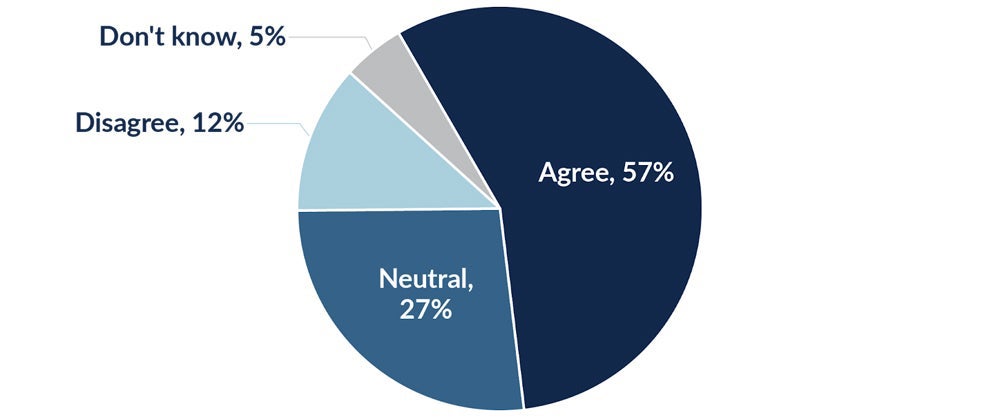

Public Perception and Awareness of AI Privacy Issues

Since ChatGPT was released, the general public has developed a basic knowledge of and interest in AI technologies. Despite the excitement, the general public’s perception of AI technology is fearful, especially as it relates to AI privacy. Many consumers do not trust the motivations of big AI and tech companies and worry that their personal data and privacy will be compromised by the technology. Frequent mergers, acquisitions, and partnerships in this space can lead to emerging monopolies and the fear of the power these organizations have.

According to a survey completed by the International Association of Privacy Professionals in 2023, 57 percent of consumers said that AI is a significant threat to their privacy, while 27 percent felt neutral about AI and privacy issues. Only 12 percent disagreed that AI would significantly harm their personal privacy.

Real-World Examples of AI and Privacy Issues

While several significant and highly publicized security breaches involving AI technology have occurred, many vendors and industries are making important strides toward better data protection. The following examples cover both failures and successes.

High-Profile Privacy Issues Involving AI

The following are some of the most significant breaches and privacy violations that directly involved AI technology over the past several years:

- Microsoft: Microsoft’s recent announcement of the Recall feature, which allows business leaders to collect, save, and review user-activity screenshots from their devices, received significant pushback for its lack of privacy design elements, as well as for the company’s recent problems with security breaches. Microsoft will now let users more easily opt in or out of the process, and it plans to improve data protection with just-in-time decryption and encrypted search index databases.

- OpenAI: OpenAI experienced its first major outage in March 2023 due to a bug that exposed certain users’ chat history, payment, and other personal data to unauthorized users.

- Google: A former Google employee stole AI trade secrets and data to share with the People’s Republic of China. While this does not necessarily impact personal data privacy, it’s concerning that tech company employees would have this level of access to highly sensitive data.

Successful Implementations of AI with Strong Privacy Protections

Many AI companies are innovating to create privacy-by-design AI technologies that benefit both businesses and consumers, including the following:

- Anthropic: Anthropic has continued to develop what it calls its constitutional AI approach, which enhances model safety and transparency. The company also follows a responsible scaling policy to regularly test and share with the public how its models are performing against biological, cyber, and other important security metrics.

- MOSTLY AI: MOSTLY AI is one of several AI vendors that has developed comprehensive technology for synthetic data generation, which protects original data from unnecessary use and exposure. The technology works especially well for responsible AI and ML development, data sharing, testing, and quality assurance.

- Glean: One of the most popular AI enterprise search solutions on the market, Glean was designed with security and privacy at its core. Its features include zero trust security, user authentication, the principle of least privilege, GDPR compliance, and data encryption at rest and in transit.

- Hippocratic AI: Hippocratic AI is a generative AI application designed for healthcare services. It complies with HIPAA and has been reviewed extensively by nurses, physicians, health systems, and payor partners to ensure data privacy is protected and patient data is used ethically in the service of patient care.

- Simplifai: Simplifai is a solution for AI-supported insurance claims and document processing. It explicitly follows a privacy-by-design approach to protect its customers’ sensitive financial data. Its privacy practices include data masking, limited storage times, regular data deletion, data encryption, and built-in platform, network, and data security components.

AI Privacy and Consumer Rights

As AI continues to integrate into more facets of our lives, concerns about privacy and consumer rights have grown significantly. The large volumes of data required to train and operate AI systems raise serious concerns about how this information is acquired, used, and secured. Transparency, informed consent, and data protection are necessary to sustain customer trust and privacy standards.

Customers have special rights over their data, such as the ability to access, update, delete, and object to its processing. Global regulatory measures aim to reconcile the revolutionary promise of AI with the necessity to preserve human privacy and consumer rights.

AI Privacy

AI systems rely on large volumes of data, including personal information, to perform optimally. These systems’ constant need for more data raises a number of key considerations concerning data collection, use, and protection:

- Data Collection and Usage: AI systems sometimes require large volumes of data, which may involve personal information. This use of sensitive data raises questions regarding how data is gathered, stored, and used.

- Transparency: It is often challenging for consumers to understand what elements of their data are collected and used. Companies should be transparent about their data practices to their customers.

- Consent: The core practice of AI privacy is obtaining consumers’ informed consent before collecting and using their data.

- Data Security: Ensuring the level of data security that keeps user information safe from breaches and illegal access is essential to maintaining consumer trust.

Consumer Rights

The growth of AI has made consumer rights more urgent. With AI systems using personal data, users must maintain control over their information. Key rights include the following:

- Right to Access: Consumers should have the right to learn what information is being collected about them and how it is used.

- Right to Correction: Customers should be able to address any inaccuracies in their information.

- Right to Deletion: Consumers should be entitled to request that their data be deleted, also known as the “right to be forgotten.”

- Right to Object: Consumers should be able to object to the use of personal data for specific purposes, such as marketing.
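
The four rights above map naturally onto operations a system holding personal data would need to support. The following is a minimal in-memory sketch under that assumption; the `ConsumerDataStore` class and its method names are hypothetical and do not represent any regulation's required interface or any vendor's API.

```python
class ConsumerDataStore:
    """Toy in-memory store illustrating the four consumer rights (hypothetical)."""

    def __init__(self):
        self._records = {}     # user_id -> dict of personal data
        self._objections = {}  # user_id -> set of purposes the user objected to

    def save(self, user_id, data):
        self._records[user_id] = dict(data)

    def access(self, user_id):
        # Right to access: return a copy of everything held about the user.
        return dict(self._records.get(user_id, {}))

    def correct(self, user_id, field, value):
        # Right to correction: overwrite an inaccurate field.
        if user_id not in self._records:
            raise KeyError(user_id)
        self._records[user_id][field] = value

    def delete(self, user_id):
        # Right to deletion: remove the user's data and related settings.
        self._records.pop(user_id, None)
        self._objections.pop(user_id, None)

    def object_to(self, user_id, purpose):
        # Right to object: record that a purpose (e.g. marketing) is off-limits.
        self._objections.setdefault(user_id, set()).add(purpose)

    def may_use(self, user_id, purpose):
        # Data may be used only if it exists and the user has not objected.
        blocked = self._objections.get(user_id, set())
        return user_id in self._records and purpose not in blocked


store = ConsumerDataStore()
store.save("u1", {"email": "old@example.com"})
store.correct("u1", "email", "new@example.com")
store.object_to("u1", "marketing")
print(store.access("u1"), store.may_use("u1", "marketing"))
```

In a real system each of these operations would also need authentication, audit logging, and propagation to backups and downstream processors, which this sketch leaves out.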

Best Practices for Managing AI and Privacy Issues

While AI presents an array of challenging privacy issues, companies can overcome these concerns by using the following best practices for data governance, establishing appropriate data use policies, and educating all stakeholders:

- Invest in Data Governance and Security Tools: Some of the best solutions for protecting AI tools and the rest of your attack surface include extended detection and response (XDR), data loss prevention, and threat intelligence and monitoring software.

- Establish an Appropriate Use Policy for AI: Managers and staff should know what data they can use and how they should use it when engaging with AI tools.

- Read the Fine Print: All users should read the AI governance documentation carefully to identify any red flags. If they’re not sure about something or if it’s unclear in their policy docs, they should contact a representative for clarification.

- Use Only Non-Sensitive Data: For any AI use case that requires using sensitive data, businesses must find a way to safely complete the operation with digital twins, data anonymization, or synthetic data.

- Educate Stakeholders and Users on Privacy: Depending on their level of access, your organization’s stakeholders and employees should receive general and specialist training on data protection and privacy.

- Enhance Built-In Security Features: To improve your model’s security features, focus heavily on data security, increasing practices like data masking, data anonymization, and synthetic data usage; also consider investing in comprehensive AI cybersecurity tool sets for protection.

- Proactively Implement Stricter Regulatory Measures: Set and enforce clear data usage policies, provide avenues for users to share feedback and concerns, and consider how AI and the necessary training data can be used without compromising industry-specific regulations or consumer expectations.

- Improve Transparency in Data Usage: Being transparent about data usage gives customers greater confidence when using your tools and also provides the necessary information that AI vendors need to pass a data and compliance audit.

- Reduce Data Storage Periods: Reducing data storage periods to the exact amount of time necessary for training and quality assurance helps protect data against unauthorized access. It also gives consumers greater peace of mind when they learn this reduced data storage policy is in place.

- Ensure Compliance with Copyright and IP Laws: While current regulations for how AI can incorporate copyrighted content and other intellectual property are murky at best, AI vendors will improve their reputation (and be better prepared for impending regulations) if they regulate and monitor their content sources from the outset.
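
Two of the practices above, data masking and shorter storage periods, are simple enough to sketch in code. The example below is a simplified illustration, not a production-grade tool: the regular expressions catch only basic email and US-style phone formats, and the function names, the `stored_at` field, and the 14-day window are assumptions made for this example.

```python
import re
from datetime import datetime, timedelta, timezone

# Deliberately simple patterns; real PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def mask_sensitive(text):
    """Replace emails and phone numbers with placeholders before the text
    is sent to an AI tool."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def purge_expired(records, max_age_days, now=None):
    """Keep only records stored within the last max_age_days days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    return [r for r in records if r["stored_at"] >= cutoff]


masked = mask_sensitive("Contact jane.doe@example.com or 555-123-4567 about the claim.")
print(masked)

# Made-up records with fixed timestamps so the result is reproducible.
now = datetime(2024, 1, 31, tzinfo=timezone.utc)
records = [
    {"id": 1, "stored_at": datetime(2024, 1, 1, tzinfo=timezone.utc)},
    {"id": 2, "stored_at": datetime(2024, 1, 25, tzinfo=timezone.utc)},
]
kept = purge_expired(records, max_age_days=14, now=now)
print([r["id"] for r in kept])
```

Masking before a prompt leaves the organization limits what a vendor can retain, and a scheduled purge enforces the retention limit rather than leaving it as a written policy only.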

3 Leading AI Privacy Tools and Technologies

AI privacy solutions and technologies assist businesses in safeguarding sensitive data from potential vulnerabilities and threats. We recommend three tools that perform penetration testing, enforce policies, monitor activity, and provide strong data protection: Nightfall AI, Private AI, and TrojAI.

Nightfall AI

Nightfall AI provides a complete, AI-native data security platform that protects sensitive data across many settings, including SaaS apps, GenAI tools, email, and endpoints. Its software employs advanced AI algorithms to identify and secure passwords, personally identifiable information (PII), and protected health information (PHI) with high precision. Nightfall is fully integrated with enterprise applications, offering real-time monitoring and policy enforcement to avoid data leaks and demonstrate compliance. Nightfall AI’s pricing depends on how much data a company needs to secure. The company offers a free plan allowing users to scan 30 GB of data monthly.

Private AI

Private AI focuses on privacy-preserving technology, including its AI-powered redaction service. This service detects and removes sensitive material from text, images, and other data types to support compliance with privacy laws such as GDPR and CCPA. Its solutions integrate into existing workflows, delivering powerful data protection without compromising usability or efficiency. Private AI offers free API keys for users to test its services, limited to 75 API calls per day. The company’s paid plan pricing is available upon request.

TrojAI

TrojAI specializes in protecting AI and machine learning systems from attacks. Its software offers comprehensive security through transformation, model monitoring, and real-time threat detection. TrojAI provides penetration testing for AI models, a firewall for AI applications, and compliance features to help protect against data poisoning and model evasion attacks. Its technologies are designed to improve the security and dependability of AI systems in enterprise environments. TrojAI pricing is available upon request; each business is different and will require a personalized quote.

Bottom Line: Addressing AI and Privacy Issues Is Essential for Consumer Trust

AI tools present businesses and consumers with many new conveniences, from task automation and guided Q&A to product design and programming. While these tools can improve users’ lives, they also risk violating individual privacy in ways that damage vendor reputation and consumer trust, cybersecurity, and regulatory compliance efforts.

Using AI responsibly to protect user privacy requires extra effort, yet it’s essential when you consider how privacy violations can impact a company’s public image. Especially as this technology matures and becomes more pervasive in users’ lives, it’s essential to follow AI regulations and develop specific AI best practices that align with your organization’s culture and customers’ privacy expectations.

For more information on how to address these AI issues, read our article on AI and ethics.